Engage: designing AI into an enterprise SaaS product

I joined Local Measure at an inflection point. The company had an established product in Engage but needed to rebuild it from the ground up for the next stage of the business. I led the design of that rebuild across four years, working directly with the VP of Design and UX and partnering with PM, engineering, and leadership.

Context

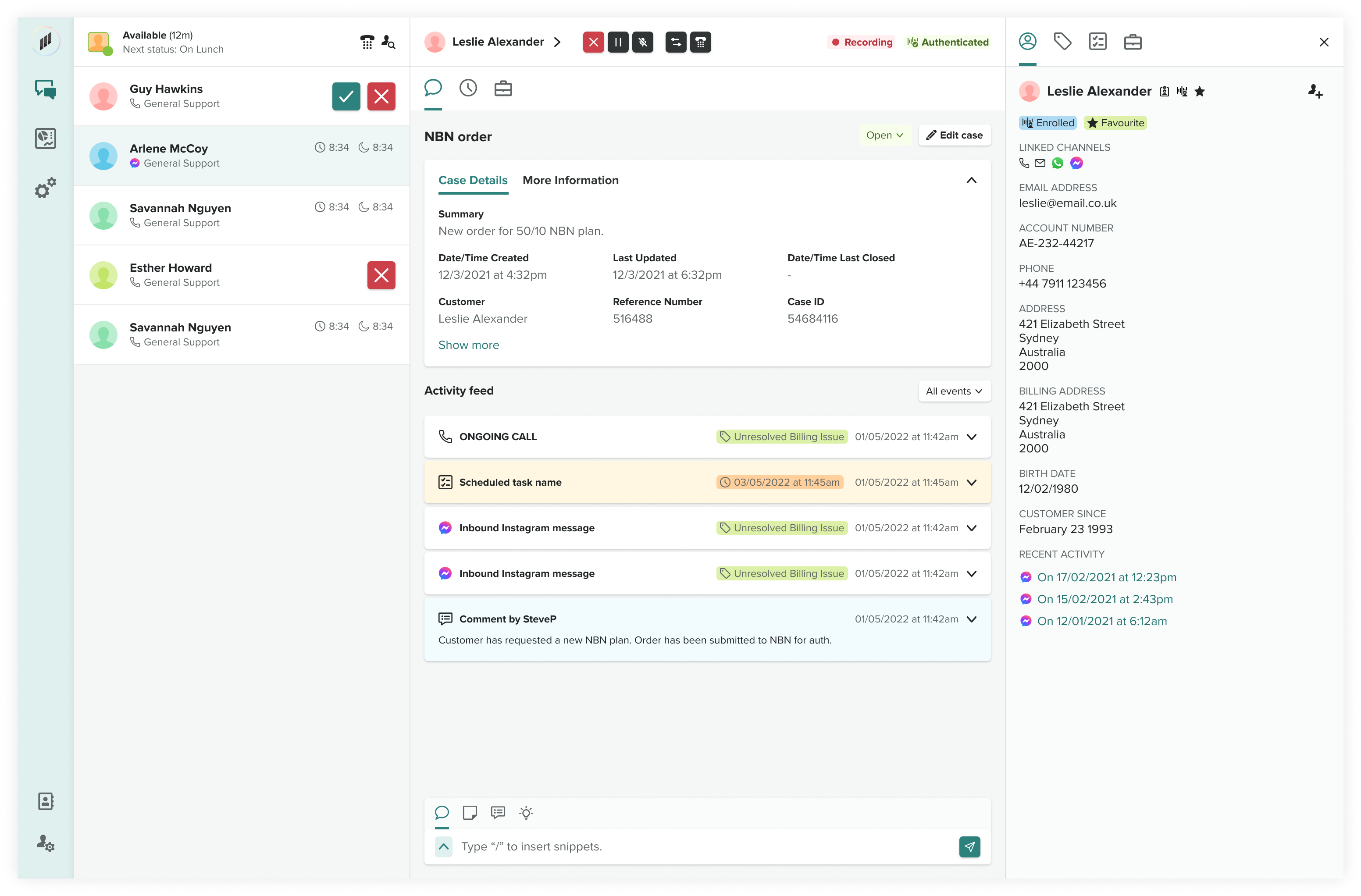

Local Measure is an enterprise contact centre platform. Engage is its core product — the tool call centre agents use to handle customer interactions across voice, chat, messaging, and email. When I joined, Engage as a product was growing rapidly, but needed to be rebuilt to handle scale and feature rollout. Its design and architecture reflected years of iterative feature addition rather than a considered whole.

AI became a significant part of that evolution. Engage shipped AI features before most competitors in the category, and those features became a crucial integrated part of the product rather than a peripheral add-on. Smart Notes was the most visible of these, but it was one of several AI tools we designed and shipped as a suite in the years before the Zendesk acquisition.

How I operated

As lead designer, I was accountable for design across Engage end-to-end. Day-to-day, that meant partnering with the VP of Design and UX on product strategy and roadmap, working closely with PMs and engineering squads on feature delivery, and running customer research directly — including interviews, surveys, and usability testing — to ground the design decisions in real agent needs. I also established a design system that became the single source of truth for the product's visual and interaction language.

Most of what shipped, I designed personally from concept through engineering handover. The operating model was lean: high ownership, close partnership with engineering, and direct access to executive stakeholders. I had significant autonomy to shape the roadmap and push back on direction when the trade-offs weren't right.

Featured work: Smart Notes

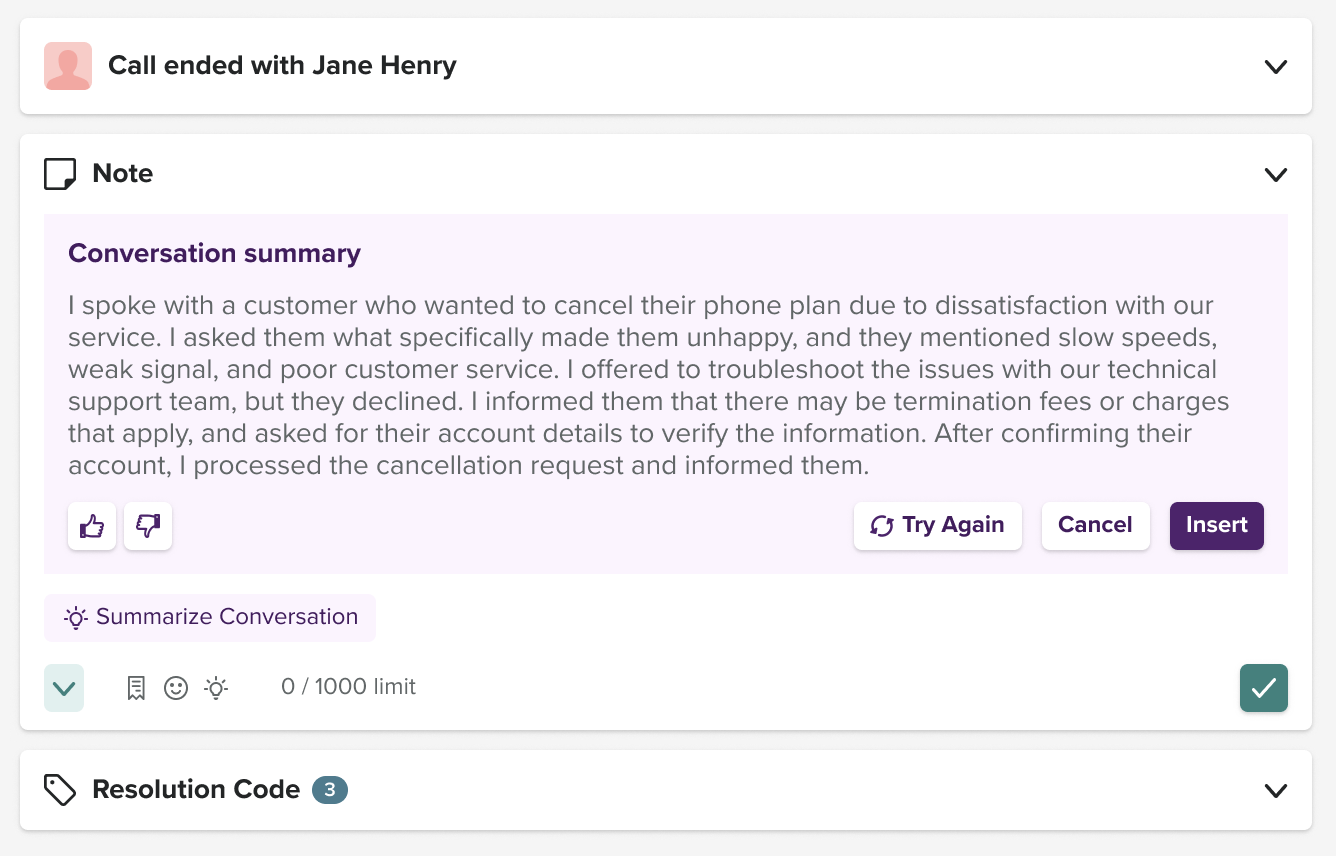

Smart Notes is an AI feature inside Engage that writes a conversation summary for the agent at the end of every customer interaction. Agents previously wrote these summaries by hand, which cost minutes per contact and compounded at scale.

The feature shipped at a moment when AI in enterprise SaaS was genuinely new. Most design patterns for working with probabilistic AI output hadn't been established yet. I had to design for a paradigm that was being written in real time, which meant making a series of judgement calls about how AI should show up in the product, how users should know when it was being used, and how much control to give them over it.

Making AI presence explicit

Agents needed to know, at a glance, when a piece of the interface had been generated by AI versus written by a human or sourced from the system. I designed a consistent visual language for AI-generated content, distinct enough to be unmistakable and subtle enough not to undermine trust. This became a system-level pattern that other AI features in the product inherited.

Enabling feedback as a first-class interaction

AI output is only as good as the feedback loop improving it. I designed feedback controls directly into the Smart Notes interface so agents could flag bad summaries inline, without disrupting their workflow. The goal was to make it cheaper for users to give feedback than to ignore a bad result.

Holding the line on customisation

Customer research surfaced a strong signal: users wanted to customise and fine-tune the AI summaries themselves, with different templates, tone adjustments, and field-level control. The instinct was to say yes, because customisation is how enterprise products typically win. I argued against it.

Giving users deep customisation over AI output creates two problems: it puts the quality burden on the customer (who is less equipped to judge good AI output than we are), and it fragments the feedback signal we need to improve the underlying model. We shipped with constrained customisation — a small set of well-reasoned options — and kept the ability to expand later as the AI matured. This was one of the harder arguments of the project, and the right call in hindsight.

What shipped and what it did

Smart Notes shipped and is in production across Local Measure's customer base. Outcomes come from customer interviews, surveys, and internal metrics across specific enterprise deployments:

Range beyond Smart Notes

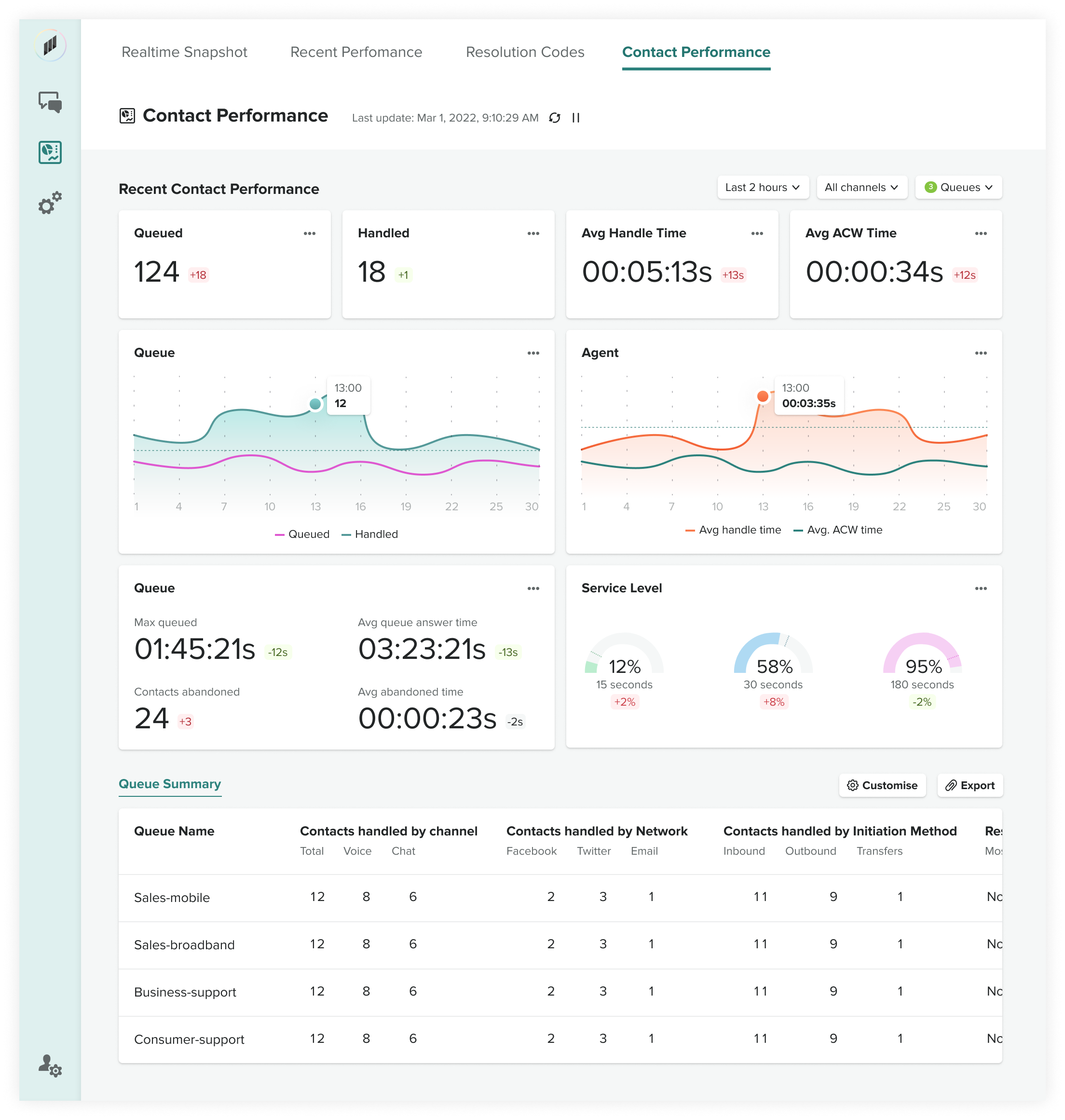

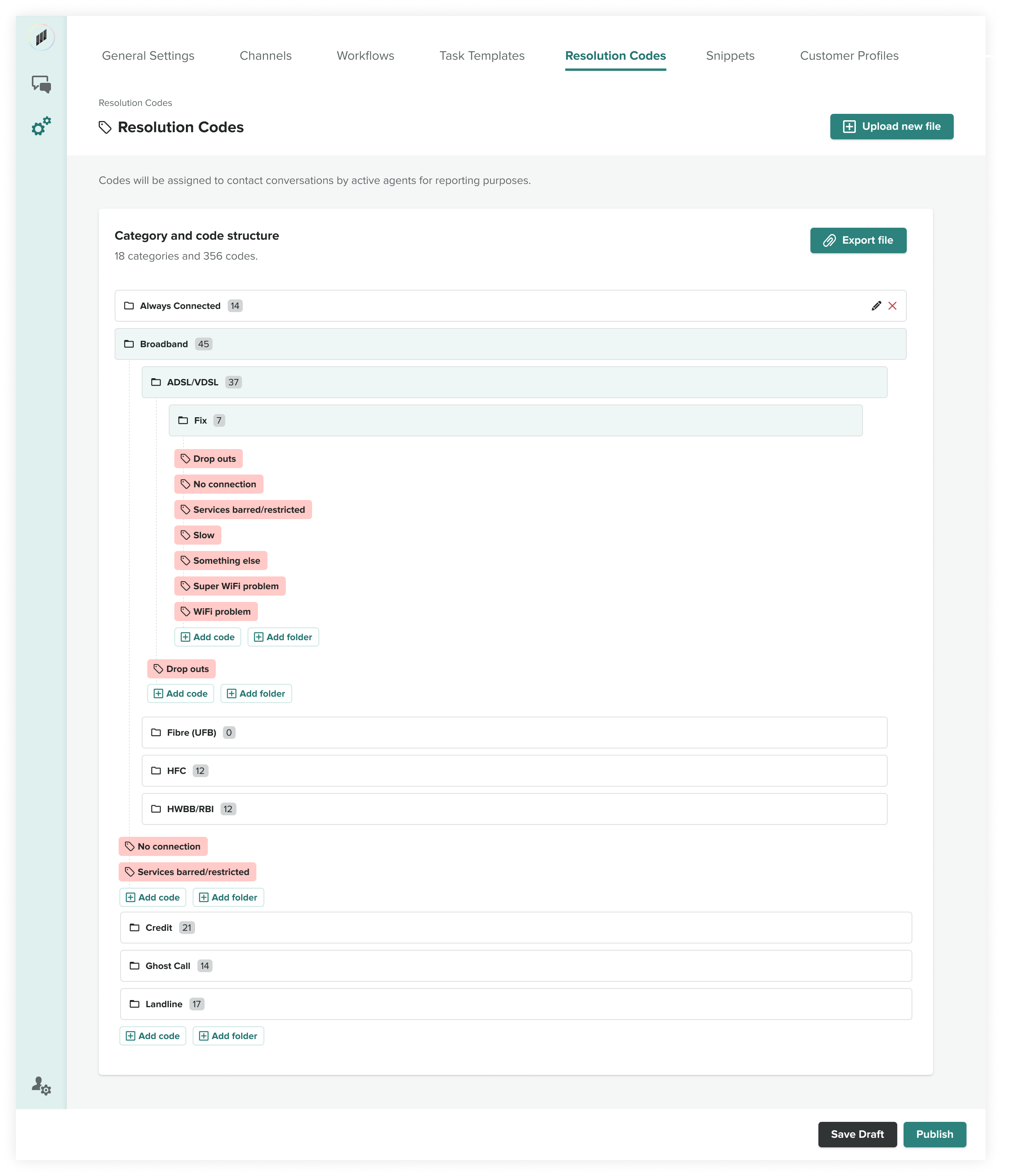

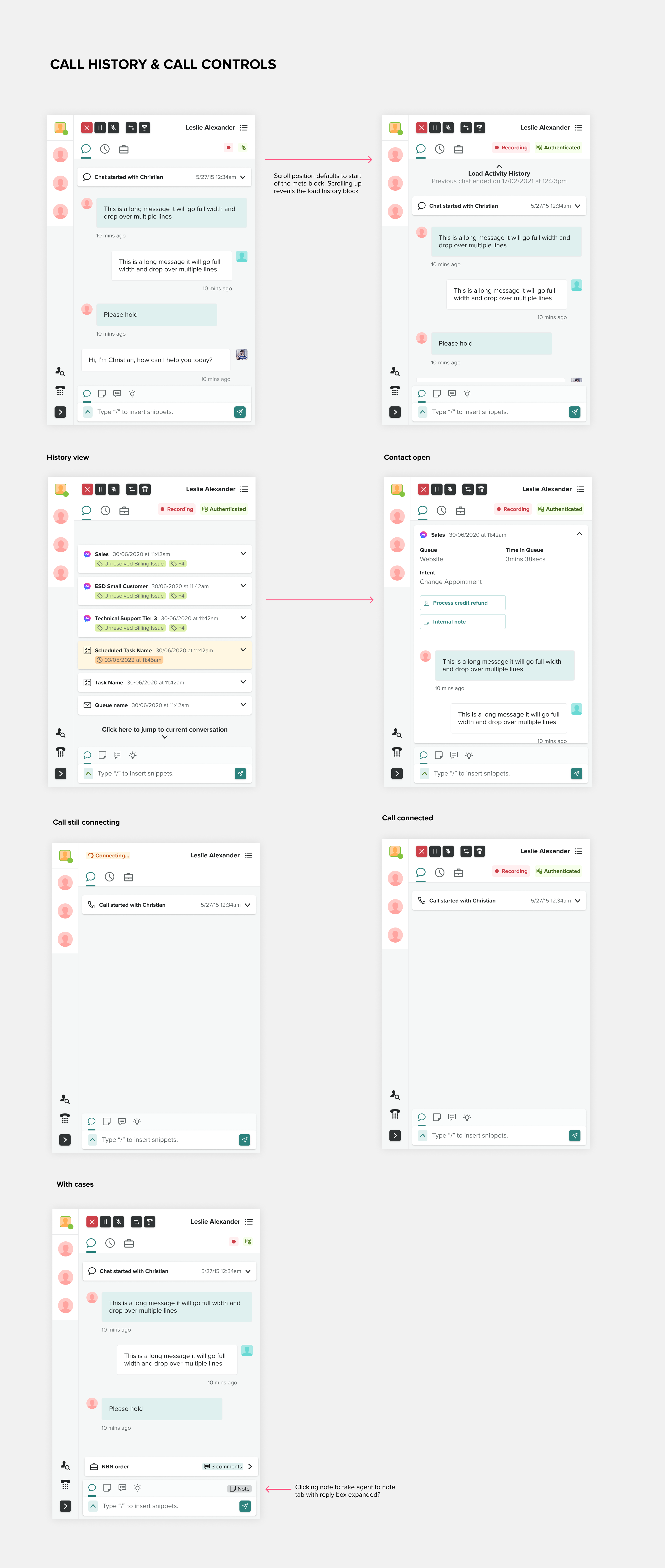

Smart Notes is the featured AI work, but it's one piece of a four-year scope. I designed the broader agent workspace, administrative configuration and workflow tools, reporting dashboards for supervisors and managers, integrations with partner platforms including Salesforce, and the product's design system. The screens above give a sense of the range: dashboards, admin flows, workflow configuration, and system-level pattern work, all shipped as part of the Engage redesign.

What I've taken from the work

Designing AI into enterprise SaaS in its early days was a crash course in judgement under uncertainty. The design patterns didn't exist yet. You had to make them, test them, and be willing to be wrong. The hardest calls weren't the obvious ones — they were the quiet ones: how much control to give users, how visible to make the AI, how to handle the gap between what customers asked for and what they actually needed.

Four years of end-to-end ownership taught me that lead design work at a small company is as much about the arguments you have as the designs you ship.